How We Built an Autonomous Coding Agent for Repetitive Engineering Tasks

Every engineering team has a backlog of well-defined, repetitive tasks that consume real time but require little judgment. We built an autonomous coding agent, which we call our 'elf', to handle that work while keeping human engineers in the review loop at every step. Here's how we built it, and how it built itself.

Abhishek Chaturvedi, senior principal engineer, also contributed to this blog post.

Every engineering organization has a backlog of tedious work that few jump out of their seats to tackle. Each task takes 20-30 minutes, requires minimal engineering judgment, and still needs a human to clone a repo, make the changes, open a pull request, and shepherd it through review.

So, we built a coding tool we call our autonomous coding elf to take that work off engineers’ plates. A human engineer describes a change in plain English, and our autonomous coding elf independently writes the code, runs tests, and submits a pull request for human review. It acts as our partner, amplifying productivity.

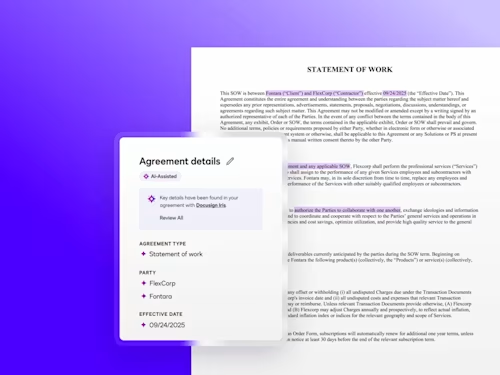

The elf's simplified workflow for turning natural language descriptions into code

The problem by the numbers

The idea started as a hackathon project in 2025. During an Azure migration refactoring effort last year, engineers were spending significant time on trivial changes.

So we asked: What if we could describe a task in a Jira ticket and have an AI engineer just implement it?

When we looked at our pull request (PR) data across all of Docusign’s code repositories, the case for a tool like this became obvious. A large number of our PRs are single-file changes with fewer than 10 lines of code – well-defined, repetitive edits that don’t require deep engineering judgment.

How it turns a ticket into a pull request

Our autonomous coding elf’s workflow is intentionally simple. An engineer describes the change they need through a Slack workflow, Jira ticket, or direct request in-app, and the tool takes it from there. It reads the task description, writes the code, runs the automated test suite, and submits a PR. No dev machine required on the engineer’s end. Just VPN access to review the result.

Inside the elf: a high-level look at the architecture, featuring the Agent Gateway API and its integration with GitHub, Jira, and Slack
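To make the ticket-to-PR flow concrete, here is a minimal Python sketch of the four steps described above: read the task, write the code, run the tests, submit a PR. It is an illustration, not the elf's actual implementation. The names `run_pipeline`, `TaskResult`, and the three callables are hypothetical, and the rule that a PR is opened only when tests pass is an assumption the post does not state.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TaskResult:
    """Outcome of one run of the hypothetical ticket-to-PR pipeline."""
    description: str
    steps_completed: List[str] = field(default_factory=list)
    pr_opened: bool = False


def run_pipeline(
    description: str,
    write_code: Callable[[str], str],
    run_tests: Callable[[str], bool],
    open_pr: Callable[[str, str], None],
) -> TaskResult:
    """Read the task, generate a patch, test it, and open a PR.

    Assumption: the PR is opened only if the test run succeeds.
    """
    result = TaskResult(description=description)
    result.steps_completed.append("read_task")

    # Stand-in for the model call that turns plain English into a patch.
    patch = write_code(description)
    result.steps_completed.append("write_code")

    # Stand-in for the automated test suite.
    tests_passed = run_tests(patch)
    result.steps_completed.append("run_tests")

    if tests_passed:
        # Stand-in for the GitHub integration.
        open_pr(description, patch)
        result.steps_completed.append("open_pr")
        result.pr_opened = True
    return result
```

Each stage is passed in as a function, so the same skeleton can be exercised with stubs in a test or wired to real services.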

Elf anatomy: how we built it

We built the autonomous coding elf using a blend of internal infrastructure and GenAI models.

The tech stack

Platform: Built on Docusign’s Microservices (MSF) infrastructure.

Integration: Deeply integrated with GitHub, Jira, and Slack to automate the coding workflow end-to-end.

The “brains” (multi-model strategy)

The coding elf uses a suite of top models, including Claude, Gemini, and Codex. It routes tasks to the most suitable model based on complexity while letting users configure their preferred model manually.
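As a rough illustration of complexity-based routing with a manual override, consider the Python sketch below. Everything in it is an assumption made for the example: the tier names, the placeholder model identifiers, and the size thresholds are not taken from the elf's real routing rules, which this post does not describe.

```python
from typing import Optional

# Placeholder model identifiers, one per hypothetical complexity tier.
ROUTES = {
    "low": "model-small",
    "medium": "model-medium",
    "high": "model-large",
}


def estimate_complexity(files_touched: int, lines_changed: int) -> str:
    """Classify a task by size. The thresholds are illustrative only."""
    if files_touched <= 1 and lines_changed < 10:
        return "low"
    if files_touched <= 5 and lines_changed < 200:
        return "medium"
    return "high"


def choose_model(
    files_touched: int,
    lines_changed: int,
    preferred: Optional[str] = None,
) -> str:
    """Return the user's preferred model if set, else route by complexity."""
    if preferred is not None:
        return preferred
    return ROUTES[estimate_complexity(files_touched, lines_changed)]
```

Checking the user's preference first keeps the override explicit: automatic routing applies only when nobody has chosen a model.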

The collaborative loop

Unlike a standard code generator, the elf was built to be feedback-aware:

Self-monitoring: It monitors its own PRs after they are submitted.

Iterative refinement: If a human reviewer leaves a comment on the PR, the elf analyzes that feedback, adjusts the code, and pushes a new iteration automatically.

Testing: It is designed to write its own unit tests for non-trivial changes to ensure code quality before a human even sees it.
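The iterative-refinement step can be sketched as a small function that applies unhandled reviewer comments and records a new iteration. This is a simplified model under stated assumptions: `PullRequest`, `process_feedback`, and the `revise` callable are hypothetical names, and a real integration would read comments from GitHub rather than from a list.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PullRequest:
    """Minimal in-memory stand-in for a PR the agent is monitoring."""
    patch: str
    iteration: int = 1
    handled_comments: List[str] = field(default_factory=list)


def process_feedback(
    pr: PullRequest,
    comments: List[str],
    revise: Callable[[str, str], str],
) -> bool:
    """Apply reviewer comments that have not been handled yet.

    Returns True if a new iteration was produced, False if there was
    nothing new to act on.
    """
    pending = [c for c in comments if c not in pr.handled_comments]
    if not pending:
        return False
    for comment in pending:
        # Stand-in for the model call that adjusts the code.
        pr.patch = revise(pr.patch, comment)
        pr.handled_comments.append(comment)
    # One push per batch of comments, not one per comment.
    pr.iteration += 1
    return True
```

Tracking handled comments makes the loop idempotent, so polling the same PR twice does not push a duplicate iteration.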

Self-evolving codebase

Once the MVP was functional, our engineers began using the elf's own workflow to improve the tool itself: bug fixes, new feature development, configuration changes, even modifications to the Dockerfile it runs in.

The same interface engineers use to submit work to the tool was used to submit work on the tool. This was a practical validation of the tool's maturity: if the elf can reliably maintain and extend its own codebase, that's a meaningful signal about the quality of code it produces and the robustness of its feedback loop. It became rare for us to write code for the tool manually rather than letting it handle its own maintenance.

Elf in action: days to minutes

One of the clearest early wins has been infrastructure configuration work. Building a new shard can require modifying 10 or more configuration files and cross-referencing values across them. The work isn’t mentally demanding, but it requires careful tracking and tribal knowledge about which values go where. Engineers also have to wait on build pipeline feedback between steps, making the whole process feel slower than the underlying work warrants.

Before we built our internal autonomous coding elf, this process took roughly a week of engineer time. With our tool, the team provided a sample commit containing the required changes and let it work. In about 15 minutes, the autonomous coding elf correctly modified 10 of 12 files on its first pass. That’s roughly 83% accuracy out of the gate, and a single follow-up comment would have resolved the remaining two files.

Service onboarding is another example. When a team needs to integrate with an internal platform, the process involves modifying a predictable set of configuration files and submitting a PR. It’s 20-30 minutes of an engineer’s time, every time. The autonomous coding elf handles this end to end while keeping a human in the review loop.

The tool has scaled faster than we expected. In just two months, the share of PRs generated by the autonomous coding elf has grown from less than 1% to over 7%.

Rolling it out carefully

A dedicated Slack app makes it accessible from anywhere, including on mobile phones, which has lowered the barrier considerably compared to the initial interface-only experience.

This fits the broader philosophy of Docusign’s AI engineering program, which we think of in four phases: Try, Use, Trust, Rely. We’ve moved past trying AI tools and are well into using them.

This approach also aligns with Docusign’s AI Innovation Principles: human review is required on every PR, AI-generated code is labeled transparently, and quality gates are in place before anything merges.
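The three safeguards named above (human review, transparent labeling, quality gates) could be expressed as a pre-merge check along the following lines. This is a sketch under assumptions, not Docusign's actual policy code: the `PrStatus` fields, the `ai-generated` label name, and the one-approval threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List

AI_LABEL = "ai-generated"  # hypothetical label name


@dataclass
class PrStatus:
    """Hypothetical snapshot of a PR's review state."""
    human_approvals: int
    labels: List[str]
    checks_passed: bool
    ai_generated: bool


def merge_blockers(pr: PrStatus) -> List[str]:
    """Return the reasons a PR may not merge; an empty list means it may."""
    blockers = []
    if pr.human_approvals < 1:
        blockers.append("needs human review")
    if pr.ai_generated and AI_LABEL not in pr.labels:
        blockers.append("missing AI label")
    if not pr.checks_passed:
        blockers.append("quality gates failing")
    return blockers
```

Returning the list of blockers, rather than a single boolean, lets a bot report exactly which safeguard is still outstanding.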

What’s next

In the near term, the tool will continue automating well-defined, repetitive tasks.

As we scale its autonomy, we expect it to move beyond simple tickets and towards handling feature work and fixes for any stakeholder, proving it can navigate our codebase and deliver correct PRs with minimal guidance. We want it to act as the technical bridge for our full team, empowering non-engineers to autonomously implement their own requirements and keep the product moving at full speed.

Want to meet our autonomous coding elf and push the boundaries around AI’s ability to streamline how you work? Explore our open engineering roles here.

As a software engineering leader at Docusign, Ahmed drives critical infrastructure initiatives, including the company's database cloud migration project. Drawing on his extensive background building large-scale systems at major cloud providers like AWS and Oracle Cloud Infrastructure (OCI), Ahmed focuses on defining internal processes that raise the bar for operational excellence across the organization. He works closely with internal engineering teams to ensure Docusign's systems maintain the highest standards of reliability and performance.
