Featured topic

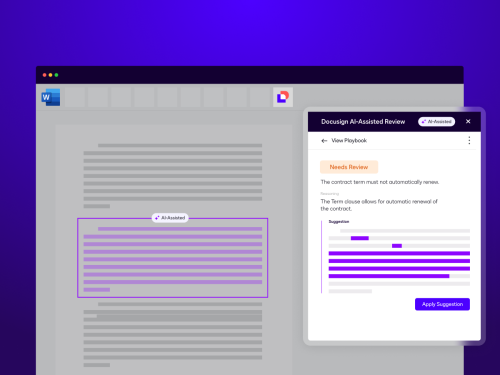

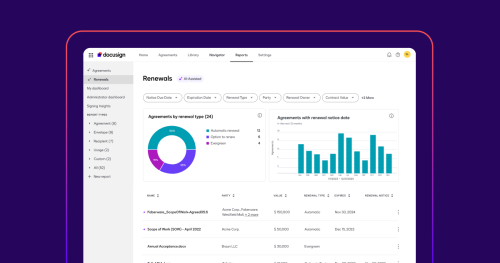

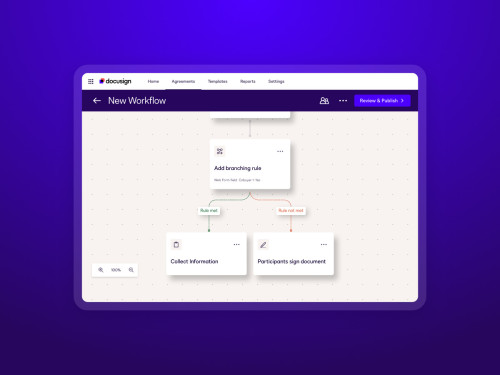

Intelligent Agreement Management

See how Intelligent Agreement Management helps organizations of all kinds create smarter agreements, commit to them more efficiently, and manage them to realize their full value.

Discover how organizations grow with Docusign

Latest posts

Discover what's new with Docusign IAM or start with eSignature for free